diff options

Diffstat (limited to 'content/docs/tutorials')

| -rw-r--r-- | content/docs/tutorials/_index.md | 5 | ||||

| -rw-r--r-- | content/docs/tutorials/dev-tut-1.md | 73 | ||||

| -rw-r--r-- | content/docs/tutorials/ovpn-tut.md | 217 | ||||

| -rw-r--r-- | content/docs/tutorials/tutorial-1.md | 153 | ||||

| -rw-r--r-- | content/docs/tutorials/tutorial-2.md | 298 | ||||

| -rw-r--r-- | content/docs/tutorials/tutorial-3.md | 210 | ||||

| -rw-r--r-- | content/docs/tutorials/tutorial-4.md | 123 |

7 files changed, 0 insertions, 1079 deletions

diff --git a/content/docs/tutorials/_index.md b/content/docs/tutorials/_index.md deleted file mode 100644 index b35d0b8..0000000 --- a/content/docs/tutorials/_index.md +++ /dev/null @@ -1,5 +0,0 @@ ---- -title: "Ouroboros Tutorials" -date: 2019-06-22 -draft: false ----

\ No newline at end of file diff --git a/content/docs/tutorials/dev-tut-1.md b/content/docs/tutorials/dev-tut-1.md deleted file mode 100644 index ceac8b6..0000000 --- a/content/docs/tutorials/dev-tut-1.md +++ /dev/null @@ -1,73 +0,0 @@ ---- -title: "Developer tutorial 1: Writing your first Ouroboros C program" -draft: false ---- - -This tutorial will guide you to write your first ouroboros program. It -will use the basic Ouroboros IPC Application Programming Interface. It -will has a client and a server that send a small message from the client -to the server. - -We will explain how to connect two applications. The server application -uses the flow_accept() call to accept incoming connections and the -client uses the flow_alloc() call to connect to the server. The -flow_accept and flow_alloc call have the following definitions: - -``` -int flow_accept(qosspec_t * qs, const struct timespec * timeo); -int flow_alloc(const char * dst, qosspec_t * qs, const struct -timespec * timeo); -``` - -On the server side, the flow_accept() call is a blocking call that will -wait for an incoming flow from a client. On the client side, the -flow_alloc() call is a blocking call that allocates a flow to *dst*. -Both calls return an non-negative integer number describing a "flow -descriptor", which is very similar to a file descriptor. On error, they -will return a negative error code. (See the [man -page](/man/man3/flow_alloc.html) for all details). If the *timeo* -parameter supplied is NULL, the calls will block indefinitely, otherwise -flow_alloc() will return -ETIMEDOUT when the time interval provided by -*timeo* expires. We are working on implementing non-blocking versions if -the provided *timeo* is 0. - -After the flow is allocated, the flow_read() and flow_write() calls -are used to read from the flow descriptor. They operate just like the -read() and write() POSIX calls. The default behaviour is that these -calls will block. To release the resource, the flow can be deallocated -using flow_dealloc. - -``` -ssize_t flow_write(int fd, const void * buf, size_t count); -ssize_t flow_read(int fd, void * buf, size_t count); int -flow_dealloc(int fd); -``` - -So a very simple application would just need a couple of lines of code -for both the server and the client: - -``` -/* server side */ -char msg[BUF_LEN]; -int fd = flow_accept(NULL, NULL); -flow_read(fd, msg, BUF_LEN); -flow_dealloc(fd); - -/* client side */ -char * msg = "message"; -int fd = flow_alloc("server", NULL, NULL); -flow_write(fd, msg, strlen(msg)); -flow_dealloc(fd); -``` - -The full code for an example is the -[oecho](/cgit/ouroboros/tree/src/tools/oecho/oecho.c) -application in the tools directory. - -To compile your C program from the command line, you have to link --lourobos-dev. For instance, in the Ouroboros repository, you can do - -``` -cd src/tools/oecho -gcc -louroboros-dev oecho.c -o oecho -```

\ No newline at end of file diff --git a/content/docs/tutorials/ovpn-tut.md b/content/docs/tutorials/ovpn-tut.md deleted file mode 100644 index 6db6812..0000000 --- a/content/docs/tutorials/ovpn-tut.md +++ /dev/null @@ -1,217 +0,0 @@ ---- -title: "Tutorial: How to create an encrypted IP tunnel" -draft: false -description: "ovpn" -date: 2019-08-31 -#type: page -draft: false ---- - -We recently added 256-bit ECDHE-AES encryption to Ouroboros (in the -_be_ branch). This tutorial shows how to create an *encrypted IP -tunnel* using the Ouroboros VPN (ovpn) tool, which exposes _tun_ -interfaces to inject Internet Protocol traffic into an Ouroboros flow. - -We'll first illustrate what's going on over an ethernet loopback -adapter and then show how to create an encrypted tunnel between two -machines connected over an IP network. - -<center> {{<figure -class="w-80" -src="/images/ovpn_tut.png">}} -</center> - -We'll create an encrypted tunnel between IP addresses 127.0.0.3 /24 and -127.0.0.8 /24, as shown in the diagram above. - -To run this tutorial, make sure that -[openssl](https://www.openssl.org) is installed on your machine(s) and -get the latest version of Ouroboros from the _be_ branch. - -``` -$ git clone --branch be https://ouroboros.rocks/git/ouroboros -$ cd ouroboros -$ mkdir build && cd build -$ cmake .. -$ make && sudo make install -``` - -# Encrypted tunnel over the loopback interface - -Open a terminal window and start ouroboros (add --stdout to log to -stdout): - -``` -$ sudo irmd --stdout -``` - -To start, the network will just consist of the loopback adapter _lo_, -so we'll create a layer _my\_layer_ consisting of a single ipcp-eth-dix -named _dix_, register the name _my\_vpn_ for the ovpn server in -_my\_layer_, and bind the ovpn binary to that name. - -``` -$ irm ipcp bootstrap type eth-dix name dix layer my_layer dev lo -$ irm reg name my_vpn layer my_layer -$ irm bind program ovpn name my_vpn -``` - -We can now start an ovpn server on 127.0.0.3. This tool requires -superuser privileges as it creates a tun device. - -``` -$ sudo ovpn --ip 127.0.0.3 --mask 255.255.255.0 -``` - -From another terminal, we can start an ovpn client to connect to the -server (which listens to the name _my\_vpn_) and pass the --crypt -option to encrypt the tunnel: - -``` -$ sudo ovpn -n my_vpn -i 127.0.0.8 -m 255.255.255.0 --crypt -``` - -The ovpn tool now created two _tun_ interfaces attached to the -endpoints of the flow, and will act as an encrypted pipe for any -packets sent to that interface: - -``` -$ ip a -... -6: tun0: <POINTOPOINT,MULTICAST,NOARP,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UNKNOWN group default qlen 500 - link/none - inet 127.0.0.3/24 scope host tun0 - valid_lft forever preferred_lft forever - inet6 fe80::f81d:9038:9358:fdf4/64 scope link stable-privacy - valid_lft forever preferred_lft forever -7: tun1: <POINTOPOINT,MULTICAST,NOARP,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UNKNOWN group default qlen 500 - link/none - inet 127.0.0.8/24 scope host tun1 - valid_lft forever preferred_lft forever - inet6 fe80::c58:ca40:5839:1e32/64 scope link stable-privacy - valid_lft forever preferred_lft forever -``` - -To test the setup, we can tcpdump one of the _tun_ interfaces, and -send some ping traffic into the other _tun_ interface. -The encrypted traffic can be shown by tcpdump on the loopback interface. -Open two more terminals: - -``` -$ sudo tcpdump -i tun1 -``` - -``` -$ sudo tcpdump -i lo -``` - -and from another terminal, send some pings into the other endpoint: - -``` -$ ping 10.10.10.1 -i tun0 -``` - -The tcpdump on the _tun1_ interface shows the ping messages arriving: - -``` -$ sudo tcpdump -i tun1 -[sudo] password for dstaesse: -tcpdump: verbose output suppressed, use -v or -vv for full protocol decode -listening on tun1, link-type RAW (Raw IP), capture size 262144 bytes -13:35:20.229267 IP heteropoda > 10.10.10.1: ICMP echo request, id 3011, seq 1, length 64 -13:35:21.234523 IP heteropoda > 10.10.10.1: ICMP echo request, id 3011, seq 2, length 64 -13:35:22.247871 IP heteropoda > 10.10.10.1: ICMP echo request, id 3011, seq 3, length 64 -``` - -while the tcpdump on the loopback shows the AES encrypted traffic that -is actually sent on the flow: - -``` -$ sudo tcpdump -i lo -tcpdump: verbose output suppressed, use -v or -vv for full protocol decode -listening on lo, link-type EN10MB (Ethernet), capture size 262144 bytes -13:35:20.229175 00:00:00:00:00:00 (oui Ethernet) > 00:00:00:00:00:00 (oui Ethernet), ethertype Unknown (0xa000), length 130: - 0x0000: 0041 0070 31f2 ae4c a03a 3e72 ec54 7ade .A.p1..L.:>r.Tz. - 0x0010: f2f3 1db4 39ce 3b62 d3ad c872 93b0 76c1 ....9.;b...r..v. - 0x0020: 4f76 b977 aa66 89c8 5c3c eedf 3085 8567 Ov.w.f..\<..0..g - 0x0030: ed60 f224 14b2 72d1 6748 b04a 84dc e350 .`.$..r.gH.J...P - 0x0040: d020 637a 6c2c 642a 214b dd83 7863 da35 ..czl,d*!K..xc.5 - 0x0050: 28b0 0539 a06e 541f cd99 7dac 0832 e8fb (..9.nT...}..2.. - 0x0060: 9e2c de59 2318 12e0 68ee da44 3948 2c18 .,.Y#...h..D9H,. - 0x0070: cd4c 58ed .LX. -13:35:21.234343 00:00:00:00:00:00 (oui Ethernet) > 00:00:00:00:00:00 (oui Ethernet), ethertype Unknown (0xa000), length 130: - 0x0000: 0041 0070 4295 e31d 05a7 f9b2 65a1 b454 .A.pB.......e..T - 0x0010: 5b6f 873f 0016 16ea 7c83 1f9b af4a 0ff2 [o.?....|....J.. - 0x0020: c2e6 4121 8bf9 1744 6650 8461 431e b2a0 ..A!...DfP.aC... - 0x0030: 94da f17d c557 b5ac 1e80 825c 7fd8 4532 ...}.W.....\..E2 - 0x0040: 11b3 4c32 626c 46a5 b05b 0383 2aff 022a ..L2blF..[..*..* - 0x0050: e631 e736 a98e 9651 e017 7953 96a1 b959 .1.6...Q..yS...Y - 0x0060: feac 9f5f 4b02 c454 7d31 e66f 2d19 3eaf ..._K..T}1.o-.>. - 0x0070: a5c8 d77f .... -13:35:22.247670 00:00:00:00:00:00 (oui Ethernet) > 00:00:00:00:00:00 (oui Ethernet), ethertype Unknown (0xa000), length 130: - 0x0000: 0041 0070 861e b65e 4227 5a42 0db4 8317 .A.p...^B'ZB.... - 0x0010: 6a75 c0c1 94d0 de18 10e9 45f3 db96 997f ju........E..... - 0x0020: 7461 2716 d9af 124d 0dd0 b6a0 e83b 95e7 ta'....M.....;.. - 0x0030: 9e5f e4e6 068f d171 727d ba25 55c7 168b ._.....qr}.%U... - 0x0040: 7aab 2d49 be53 1133 eab0 624a 5445 d665 z.-I.S.3..bJTE.e - 0x0050: ca5c 7a28 9dfa 58c2 e2fd 715d 4b87 246a .\z(..X...q]K.$j - 0x0060: f54c b8c8 5040 1c1b aba1 6107 39e7 604b .L..P@....a.9.`K - 0x0070: 5fb2 73ef -``` - -# Encrypted tunnel between two IP hosts connected to the Internet - -To create an encrypted tunnel between two Internet hosts, the same -procedure can be followed. The only difference is that we need to use -an ipcpd-udp on the end hosts connected to the ip address of the -machine, and on the client side, add the MD5 hash for that name to the -hosts file. The machines must have a port that is reachable from -outside, the default is 3435, but this can be configured using the -sport option. - -On both machines (fill in the correct IP address): - -``` -irm i b t udp n udp l my_layer ip <address> -``` - -On the server machine, bind and register the ovpn tool as above: - -``` -$ irm reg name my_vpn layer my_layer -$ irm bind program ovpn name my_vpn -``` - -On the _client_ machine, add a DNS entry for the MD5 hash for "my_vpn" -with the server IP address to /etc/hosts: - -``` -$ cat /etc/hosts -# Static table lookup for hostnames. -# See hosts(5) for details. - -... - -<server_ip> 2694581a473adbf3d988f56c79953cae - -``` - -and you should be able to create the ovpn tunnel as above. - -On the server: - -``` -$ sudo ovpn --ip 127.0.0.3 --mask 255.255.255.0 -``` - -And on the client: - -``` -$ sudo ovpn -n my_vpn -i 127.0.0.8 -m 255.255.255.0 --crypt -``` - ---- - -Changelog: - -2018-08-31: Initial version.

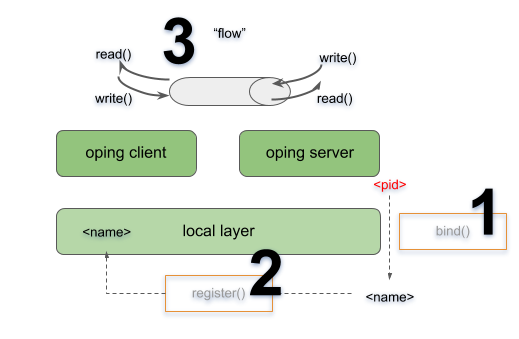

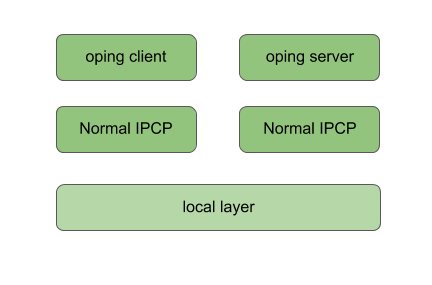

\ No newline at end of file diff --git a/content/docs/tutorials/tutorial-1.md b/content/docs/tutorials/tutorial-1.md deleted file mode 100644 index d1ac3c6..0000000 --- a/content/docs/tutorials/tutorial-1.md +++ /dev/null @@ -1,153 +0,0 @@ ---- -title: "Tutorial 1: local test" -draft: false ---- - -This tutorial runs through the basics of Ouroboros. Here, we will see -the general use of two core components of Ouroboros, the IPC Resource -Manager daemon (IRMd) and an IPC Process (IPCP). - - - -We will start the IRMd, create a local IPCP, start a ping server and -connect a client. This will involve **binding (1)** that server to a -name and **registering (2)** that name into the local layer. After that -the client will be able to **allocate a flow (3)** to that name for -which the server will respond. - -We recommend to open 3 terminal windows for this tutorial. In the first -window, start the IRMd (as a superuser) in stdout mode. The output shows -the process id (pid) of the IRMd, which will be different on your -machine. - -``` -$ sudo irmd --stdout -==02301== irmd(II): Ouroboros IPC Resource Manager daemon started\... -``` - -The type of IPCP we will create is a "local" IPCP. The local IPCP is a -kind of loopback interface that is native to Ouroboros. It implements -all the functions that the Ouroboros API provides, but only for a local -scope. The IPCP create function will instantiate a new local IPC -process, which in our case has pid 2324. The "ipcp create" command -merely creates the IPCP. At this point it is not a part of a layer. We -will also need to bootstrap this IPCP in a layer, we will name it -"local_layer". As a shortcut, the bootstrap command will -automatically create an IPCP if no IPCP by than name exists, so in this -case, the IPCP create command is optional. In the second terminal, enter -the commands: - -``` -$ irm ipcp create type local name local_ipcp -$ irm ipcp bootstrap type local name local_ipcp layer local_layer -``` - -The IRMd and ipcpd output in the first terminal reads: - -``` -==02301== irmd(II): Created IPCP 2324. -==02324== ipcpd-local(II): Bootstrapped local IPCP with pid 2324. -==02301== irmd(II): Bootstrapped IPCP 2324 in layer local_layer. -``` - -From the third terminal window, let's start our oping application in -server mode ("oping --help" shows oping command line parameters): - -``` -$ oping --listen -Ouroboros ping server started. -``` - -The IRMd will notice that an oping server with pid 10539 has started: - -``` -==02301== irmd(DB): New instance (10539) of oping added. -==02301== irmd(DB): This process accepts flows for: -``` - -The server application is not yet reachable by clients. Next we will -bind the server to a name and register that name in the -"local_layer". The name for the server can be chosen at will, let's -take "oping_server". In the second terminal window, execute: - -``` -$ irm bind proc 2337 name oping_server -$ irm register name oping_server layer local_layer -``` - -The IRMd and IPCPd in terminal one will now acknowledge that the name is -bound and registered: - -``` -==02301== irmd(II): Bound process 2337 to name oping_server. -==02324== ipcpd-local(II): Registered 4721372d. -==02301== irmd(II): Registered oping_server in local_layer as -4721372d. -``` - -Ouroboros registers name not in plaintext but using a (configurable) -hashing algorithm. The default hash is a 256 bit SHA3 hash. The output -in the logs is truncated to the first 4 bytes in a HEX notation. - -Now that we have bound and registered our server, we can connect from -the client. In the second terminal window, start an oping client with -destination oping_server and it will begin pinging: - -``` -$ oping -n oping_server -c 5 -Pinging oping_server with 64 bytes of data: - -64 bytes from oping_server: seq=0 time=0.694 ms -64 bytes from oping_server: seq=1 time=0.364 ms -64 bytes from oping_server: seq=2 time=0.190 ms -64 bytes from oping_server: seq=3 time=0.269 ms -64 bytes from oping_server: seq=4 time=0.351 ms - ---- oping_server ping statistics --- -5 SDUs transmitted, 5 received, 0% packet loss, time: 5001.744 ms -rtt min/avg/max/mdev = 0.190/0.374/0.694/0.192 ms -``` - -The server will acknowledge that it has a new flow connected on flow -descriptor 64, which will time out a few seconds after the oping client -stops sending: - -``` -New flow 64. -Flow 64 timed out. -``` - -The IRMd and IPCP logs provide some additional output detailing the flow -allocation process: - -``` -==02324== ipcpd-local(DB): Allocating flow to 4721372d on fd 64. -==02301== irmd(DB): Flow req arrived from IPCP 2324 for 4721372d. -==02301== irmd(II): Flow request arrived for oping_server. -==02324== ipcpd-local(II): Pending local allocation request on fd 64. -==02301== irmd(II): Flow on port_id 0 allocated. -==02324== ipcpd-local(II): Flow allocation completed, fds (64, 65). -==02301== irmd(II): Flow on port_id 1 allocated. -==02301== irmd(DB): New instance (2337) of oping added. -==02301== irmd(DB): This process accepts flows for: -==02301== irmd(DB): oping_server -``` - -First, the IPCPd shows that it will allocate a flow towards a -destination hash "4721372d" (truncated). The IRMd logs that IPCPd 2324 -(our local IPCPd) requests a flow towards any process that is listening -for "4721372d", and resolves it to "oping_server", as that is a -process that is bound to that name. At this point, the local IPCPd has a -pending flow on the client side. Since this is the first port_id in the -system, it has port_id 0. The server will accept the flow and the other -end of the flow gets port_id 1. The local IPCPd sees that the flow -allocation is completed. Internally it sees the endpoints as flow -descriptors 64 and 65 which map to port_id 0 and port_id 1. The IPCP -cannot directly access port_ids, they are assigned and managed by the -IRMd. After it has accepted the flow, the oping server enters -flow_accept() again. The IRMd notices the instance and reports that it -accepts flows for "oping_server". - -This concludes this first short tutorial. All running processes can be -terminated by issuing a Ctrl-C command in their respective terminals or -you can continue with the next tutorial. diff --git a/content/docs/tutorials/tutorial-2.md b/content/docs/tutorials/tutorial-2.md deleted file mode 100644 index c3925dc..0000000 --- a/content/docs/tutorials/tutorial-2.md +++ /dev/null @@ -1,298 +0,0 @@ ---- -title: "Tutorial 2: Adding a layer" -draft: false ---- - -In this tutorial we will add a __unicast layer__ on top of the local -layer. Make sure you have a [local -layer](/docs/tutorials/tutorial-1/) running. The network will look -like this: - - - -Let's start adding the unicast layer. We will first bootstrap a -unicast IPCP, with name "U1" into the layer "U" (using -default options). In terminal 2, type: - -``` -$ irm ipcp bootstrap type unicast name U1 layer U -``` - -The IRMd and IPCP will report the bootstrap: - -``` -==02301== irmd(II): Created IPCP 4363. -==04363== normal-ipcp(DB): IPCP got address 465922905. -==04363== directory(DB): Bootstrapping directory. -==04363== directory(II): Directory bootstrapped. -==04363== normal-ipcp(DB): Bootstrapped in layer normal_layer. -==02301== irmd(II): Bootstrapped IPCP 4363 in layer normal_layer. -==02301== irmd(DB): New instance (4363) of ipcpd-normal added. -==02301== irmd(DB): This process accepts flows for: -``` - -The new IPCP has pid 4363. It also generated an *address* for itself, -465922905. Then it bootstrapped a directory. The directory will map -registered names to an address or a set of addresses. In the normal DHT -the current default (and only option) for the directory is a Distributed -Hash Table (DHT) based on the Kademlia protocol, similar to the DHT used -in the mainline BitTorrent as specified by the -[BEP5](http://www.bittorrent.org/beps/bep_0005.html). This DHT will use -the hash algorithm specified for the layer (default is 256-bit SHA3) -instead of the SHA1 algorithm used by Kademlia. Just like any -Ouroboros-capable process, the IRMd will notice a new instance of the -normal IPCP. We will now bind this IPCP to some names and register them -in the local_layer: - -``` -$ irm bind ipcp normal_1 name normal_1 -$ irm bind ipcp normal_1 name normal_layer -$ irm register name normal_1 layer local_layer -$ irm register name normal_layer layer local_layer -``` - -The "irm bind ipcp" call is a shorthand for the "irm bind proc" call -that uses the ipcp name instead of the pid for convenience. Note that -we have bound the same process to two different names. This is to -allow enrollment using a layer name (anycast) instead of a specific -ipcp_name. The IRMd and local IPCP should log the following, just as -in tutorial 1: - -``` -==02301== irmd(II): Bound process 4363 to name normal_1. -==02301== irmd(II): Bound process 4363 to name normal_layer. -==02324== ipcpd-local(II): Registered e9504761. -==02301== irmd(II): Registered normal_1 in local_layer as e9504761. -==02324== ipcpd-local(II): Registered f40ee0f0. -==02301== irmd(II): Registered normal_layer in local_layer as -f40ee0f0. -``` - -We will now create a second IPCP and enroll it in the normal_layer. -Like the "irm ipcp bootstrap command", the "irm ipcp enroll" command -will create the IPCP if an IPCP with that name does not yet exist in the -system. An "autobind" option is a shorthand for binding the IPCP to -the IPCP name and the layer name. - -``` -$ irm ipcp enroll name normal_2 layer normal_layer autobind -``` - -The activity is shown by the output of the IRMd and the IPCPs. Let's -break it down. First, the new normal IPCP is created and bound to its -process name: - -``` -==02301== irmd(II): Created IPCP 13569. -==02301== irmd(II): Bound process 13569 to name normal_2. -``` - -Next, that IPCP will *enroll* with an existing member of the layer -"normal_layer". To do that it first allocates a flow over the local -layer: - -``` -==02324== ipcpd-local(DB): Allocating flow to f40ee0f0 on fd 64. -==02301== irmd(DB): Flow req arrived from IPCP 2324 for f40ee0f0. -==02301== irmd(II): Flow request arrived for normal_layer. -==02324== ipcpd-local(II): Pending local allocation request on fd 64. -==02301== irmd(II): Flow on port_id 0 allocated. -==02324== ipcpd-local(II): Flow allocation completed, fds (64, 65). -==02301== irmd(II): Flow on port_id 1 allocated. -``` - -Over this flow, it connects to the enrollment component of the normal_1 -IPCP. It sends some information that it will speak the Ouroboros -Enrollment Protocol (OEP). Then it will receive boot information from -normal_1 (the configuration of the layer that was provided when we -bootstrapped the normal_1 process), such as the hash it will use for -the directory. It signals normal_1 that it got the information so that -normal_1 knows this was successful. It will also get an address. After -enrollment is complete, both normal_1 and normal_2 will be ready to -accept incoming flows: - -``` -==13569== connection-manager(DB): Sending cacep info for protocol OEP to -fd 64. -==13569== enrollment(DB): Getting boot information. -==02301== irmd(DB): New instance (4363) of ipcpd-normal added. -==02301== irmd(DB): This process accepts flows for: -==02301== irmd(DB): normal_layer -==02301== irmd(DB): normal_1 -==04363== enrollment(DB): Enrolling a new neighbor. -==04363== enrollment(DB): Sending enrollment info (49 bytes). -==13569== enrollment(DB): Received enrollment info (49 bytes). -==13569== normal-ipcp(DB): IPCP got address 416743497. -==04363== enrollment(DB): Neighbor enrollment successful. -==02301== irmd(DB): New instance (13569) of ipcpd-normal added. -==02301== irmd(DB): This process accepts flows for: -==02301== irmd(DB): normal_2 -``` - -Now that the member is enrolled, normal_1 and normal_2 will deallocate -the flow over which it enrolled and signal the IRMd that the enrollment -was successful: - -``` -==02301== irmd(DB): Partial deallocation of port_id 0 by process -13569. -==02301== irmd(DB): Partial deallocation of port_id 1 by process 4363. -==02301== irmd(II): Completed deallocation of port_id 0 by process -2324. -==02301== irmd(II): Completed deallocation of port_id 1 by process -2324. -==02324== ipcpd-local(II): Flow with fd 64 deallocated. -==02324== ipcpd-local(II): Flow with fd 65 deallocated. -==13569== normal-ipcp(II): Enrolled with normal_layer. -==02301== irmd(II): Enrolled IPCP 13569 in layer normal_layer. -``` - -Now that normal_2 is a full member of the layer, the irm tool will -complete the autobind option and bind normal_2 to the name -"normal_layer" so it can also enroll new members. - -``` -==02301== irmd(II): Bound process 13569 to name normal_layer. -``` - - - -At this point, have two enrolled members of the normal_layer. What we -need to do next is connect them. We will need a *management flow*, for -the management network, which is used to distribute point-to-point -information (such as routing information) and a *data transfer flow* -over which the layer will forward traffic coming either from higher -layers or internal components (such as the DHT and flow allocator). They -can be established in any order, but it is recommended to create the -management network first to achieve the minimal setup times for the -network layer: - -``` -$ irm ipcp connect name normal_2 dst normal_1 comp mgmt -$ irm ipcp connect name normal_2 dst normal_1 comp dt -``` - -The IPCP and IRMd log the flow and connection establishment: - -``` -==02301== irmd(DB): Connecting Management to normal_1. -==02324== ipcpd-local(DB): Allocating flow to e9504761 on fd 64. -==02301== irmd(DB): Flow req arrived from IPCP 2324 for e9504761. -==02301== irmd(II): Flow request arrived for normal_1. -==02324== ipcpd-local(II): Pending local allocation request on fd 64. -==02301== irmd(II): Flow on port_id 0 allocated. -==02324== ipcpd-local(II): Flow allocation completed, fds (64, 65). -==02301== irmd(II): Flow on port_id 1 allocated. -==13569== connection-manager(DB): Sending cacep info for protocol LSP to -fd 64. -==04363== link-state-routing(DB): Type mgmt neighbor 416743497 added. -==02301== irmd(DB): New instance (4363) of ipcpd-normal added. -==02301== irmd(DB): This process accepts flows for: -==02301== irmd(DB): normal_layer -==02301== irmd(DB): normal_1 -==13569== link-state-routing(DB): Type mgmt neighbor 465922905 added. -==02301== irmd(II): Established Management connection between IPCP 13569 -and normal_1. -``` - -The IPCPs established a management flow between the link-state routing -components (currently that is the only component that needs a management -flow). The output is similar for the data transfer flow, however, -creating a data transfer flow triggers some additional activity: - -``` -==02301== irmd(DB): Connecting Data Transfer to normal_1. -==02324== ipcpd-local(DB): Allocating flow to e9504761 on fd 66. -==02301== irmd(DB): Flow req arrived from IPCP 2324 for e9504761. -==02301== irmd(II): Flow request arrived for normal_1. -==02324== ipcpd-local(II): Pending local allocation request on fd 66. -==02301== irmd(II): Flow on port_id 2 allocated. -==02324== ipcpd-local(II): Flow allocation completed, fds (66, 67). -==02301== irmd(II): Flow on port_id 3 allocated. -==13569== connection-manager(DB): Sending cacep info for protocol dtp to -fd 65. -==04363== dt(DB): Added fd 65 to SDU scheduler. -==04363== link-state-routing(DB): Type dt neighbor 416743497 added. -==02301== irmd(DB): New instance (4363) of ipcpd-normal added. -==02301== irmd(DB): This process accepts flows for: -==02301== irmd(DB): normal_layer -==02301== irmd(DB): normal_1 -==13569== dt(DB): Added fd 65 to SDU scheduler. -==13569== link-state-routing(DB): Type dt neighbor 465922905 added. -==13569== dt(DB): Could not get nhop for addr 465922905. -==02301== irmd(II): Established Data Transfer connection between IPCP -13569 and normal_1. -==13569== dt(DB): Could not get nhop for addr 465922905. -==13569== dht(DB): Enrollment of DHT completed. -``` - -First, the data transfer flow is added to the SDU scheduler. Next, the -neighbor's address is added to the link-state database and a Link-State -Update message is broadcast over the management network. Finally, if the -DHT is not yet enrolled, it will try to do so when it detects a new data -transfer flow. Since this is the first data transfer flow in the -network, the DHT will try to enroll. It may take some time for the -routing entry to get inserted to the forwarding table, so the DHT -re-tries a couple of times (this is the "could not get nhop" message -in the debug log). - -Our oping server is not registered yet in the normal layer. Let's -register it in the normal layer as well, and connect the client: - -``` -$ irm r n oping_server layer normal_layer -$ oping -n oping_server -c 5 -``` - -The IRMd and IPCP will log: - -``` -==02301== irmd(II): Registered oping_server in normal_layer as -465bac77. -==02301== irmd(II): Registered oping_server in normal_layer as -465bac77. -==02324== ipcpd-local(DB): Allocating flow to 4721372d on fd 68. -==02301== irmd(DB): Flow req arrived from IPCP 2324 for 4721372d. -==02301== irmd(II): Flow request arrived for oping_server. -==02324== ipcpd-local(II): Pending local allocation request on fd 68. -==02301== irmd(II): Flow on port_id 4 allocated. -==02324== ipcpd-local(II): Flow allocation completed, fds (68, 69). -==02301== irmd(II): Flow on port_id 5 allocated. -==02301== irmd(DB): New instance (2337) of oping added. -==02301== irmd(DB): This process accepts flows for: -==02301== irmd(DB): oping_server -==02301== irmd(DB): Partial deallocation of port_id 4 by process 749. -==02301== irmd(II): Completed deallocation of port_id 4 by process -2324. -==02324== ipcpd-local(II): Flow with fd 68 deallocated. -==02301== irmd(DB): Dead process removed: 749. -==02301== irmd(DB): Partial deallocation of port_id 5 by process 2337. -==02301== irmd(II): Completed deallocation of port_id 5 by process -2324. -==02324== ipcpd-local(II): Flow with fd 69 deallocated. -``` - -The client connected over the local layer instead of the normal layer. -This is because the IRMd prefers the local layer. If we unregister the -name from the local layer, the client will connect over the normal -layer: - -``` -$ irm unregister name oping_server layer local_layer -$ oping -n oping_server -c 5 -``` - -As shown by the logs (the normal IPCP doesn't log the flow allocation): - -``` -==02301== irmd(DB): Flow req arrived from IPCP 13569 for 465bac77. -==02301== irmd(II): Flow request arrived for oping_server. -==02301== irmd(II): Flow on port_id 5 allocated. -==02301== irmd(II): Flow on port_id 4 allocated. -==02301== irmd(DB): New instance (2337) of oping added. -==02301== irmd(DB): This process accepts flows for: -==02301== irmd(DB): oping_server -``` - -This concludes tutorial 2. You can shut down everything or continue with -tutorial 3. diff --git a/content/docs/tutorials/tutorial-3.md b/content/docs/tutorials/tutorial-3.md deleted file mode 100644 index 2dd0645..0000000 --- a/content/docs/tutorials/tutorial-3.md +++ /dev/null @@ -1,210 +0,0 @@ ---- -title: "Tutorial 3: IPCP statistics" -draft: false ---- - -For this tutorial, you should have a local layer, a normal layer and a -ping server registered in the normal layer. You will need to have the -FUSE libraries installed and Ouroboros compiled with FUSE support. We -will show you how to get some statistics from the network layer which is -exported by the IPCPs at /tmp/ouroboros (this mountpoint can be set at -compile time): - -``` -$ tree /tmp/ouroboros -/tmp/ouroboros/ -|-- ipcpd-normal.13569 -| |-- dt -| | |-- 0 -| | |-- 1 -| | `-- 65 -| `-- lsdb -| |-- 416743497.465922905 -| |-- 465922905.416743497 -| |-- dt.465922905 -| `-- mgmt.465922905 -`-- ipcpd-normal.4363 - |-- dt - | |-- 0 - | |-- 1 - | `-- 65 - `-- lsdb - |-- 416743497.465922905 - |-- 465922905.416743497 - |-- dt.416743497 - `-- mgmt.416743497 - -6 directories, 14 files -``` - -There are two filesystems, one for each normal IPCP. Currently, it shows -information for two components: data transfer and the link-state -database. The data transfer component lists flows on known flow -descriptors. The flow allocator component will usually be on fd 0 and -the directory (DHT). There is a single (N-1) data transfer flow on fd 65 -that the IPCPs can use to send data (these fd's will usually not be the -same). The routing component sees that data transfer flow as two -unidirectional links. It has a management flow and data transfer flow to -its neighbor. Let's have a look at the data transfer flow in the -network: - -``` -$ cat /tmp/ouroboros/ipcpd-normal.13569/dt/65 -Flow established at: 2018-03-07 18:47:43 -Endpoint address: 465922905 -Queued packets (rx): 0 -Queued packets (tx): 0 - -Qos cube 0: - sent (packets): 4 - sent (bytes): 268 - rcvd (packets): 3 - rcvd (bytes): 298 - local sent (packets): 4 - local sent (bytes): 268 - local rcvd (packets): 3 - local rcvd (bytes): 298 - dropped ttl (packets): 0 - dropped ttl (bytes): 0 - failed writes (packets): 0 - failed writes (bytes): 0 - failed nhop (packets): 0 - failed nhop (bytes): 0 - -<no traffic on other qos cubes> -``` - -The above output shows the statistics for the data transfer component of -the IPCP that enrolled into the layer. It shows the time the flow was -established, the endpoint address and the number of packets that are in -the incoming and outgoing queues. Then it lists packet statistics per -QoS cube. It sent 4 packets, and received 3 packets. All the packets -came from local sources (internal components of the IPCP) and were -delivered to local destinations. Let's have a look where they went. - -``` -$ cat /tmp/ouroboros/ipcpd-normal.13569/dt/1 -Flow established at: 2018-03-07 18:47:43 -Endpoint address: flow-allocator -Queued packets (rx): 0 -Queued packets (tx): 0 - -<no packets on this flow> -``` - -There is no traffic on fd 0, which is the flow allocator component. This -will only be used when higher layer applications will use this normal -layer. Let's have a look at fd 1. - -``` -$ cat /tmp/ouroboros/ipcpd-normal.13569/dt/1 -Flow established at: 2018-03-07 18:47:43 -Endpoint address: dht -Queued packets (rx): 0 -Queued packets (tx): 0 - -Qos cube 0: - sent (packets): 3 - sent (bytes): 298 - rcvd (packets): 0 - rcvd (bytes): 0 - local sent (packets): 0 - local sent (bytes): 0 - local rcvd (packets): 6 - local rcvd (bytes): 312 - dropped ttl (packets): 0 - dropped ttl (bytes): 0 - failed writes (packets): 0 - failed writes (bytes): 0 - failed nhop (packets): 2 - failed nhop (bytes): 44 - -<no traffic on other qos cubes> -``` - -The traffic for the directory (DHT) is on fd1. Take note that this is -from the perspective of the data transfer component. The data transfer -component sent 3 packets to the DHT, these are the 3 packets it received -from the data transfer flow. The data transfer component received 6 -packets from the DHT. It only sent 4 on fd 65. 2 packets failed because -of nhop. This is because the forwarding table was being updated from the -routing table. Let's send some traffic to the oping server. - -``` -$ oping -n oping_server -i 0 -Pinging oping_server with 64 bytes of data: - -64 bytes from oping_server: seq=0 time=0.547 ms -... -64 bytes from oping_server: seq=999 time=0.184 ms - ---- oping_server ping statistics --- -1000 SDUs transmitted, 1000 received, 0% packet loss, time: 106.538 ms -rtt min/avg/max/mdev = 0.151/0.299/2.269/0.230 ms -``` - -This sent 1000 packets to the server. Let's have a look at the flow -allocator: - -``` -$ cat /tmp/ouroboros/ipcpd-normal.13569/dt/0 -Flow established at: 2018-03-07 18:47:43 -Endpoint address: flow-allocator -Queued packets (rx): 0 -Queued packets (tx): 0 - -Qos cube 0: - sent (packets): 1 - sent (bytes): 59 - rcvd (packets): 0 - rcvd (bytes): 0 - local sent (packets): 0 - local sent (bytes): 0 - local rcvd (packets): 1 - local rcvd (bytes): 51 - dropped ttl (packets): 0 - dropped ttl (bytes): 0 - failed writes (packets): 0 - failed writes (bytes): 0 - failed nhop (packets): 0 - failed nhop (bytes): 0 - -<no traffic on other qos cubes> -``` - -The flow allocator has sent and received a message: a request and a -response for the flow allocation between the oping client and server. -The data transfer flow will also have additional traffic: - -``` -$ cat /tmp/ouroboros/ipcpd-normal.13569/dt/65 -Flow established at: 2018-03-07 18:47:43 -Endpoint address: 465922905 -Queued packets (rx): 0 -Queued packets (tx): 0 - -Qos cube 0: - sent (packets): 1013 - sent (bytes): 85171 - rcvd (packets): 1014 - rcvd (bytes): 85373 - local sent (packets): 13 - local sent (bytes): 1171 - local rcvd (packets): 14 - local rcvd (bytes): 1373 - dropped ttl (packets): 0 - dropped ttl (bytes): 0 - failed writes (packets): 0 - failed writes (bytes): 0 - failed nhop (packets): 0 - failed nhop (bytes): 0 -``` - -This shows the traffic from the oping application. The additional -traffic (the oping sent 1000, the flow allocator 1 and the DHT -previously sent 3) is additional DHT traffic (the DHT periodically -updates). Also note that the traffic reported on the link includes the -FRCT and data transfer headers which in the default configuration is 20 -bytes per packet. - -This concludes tutorial 3. diff --git a/content/docs/tutorials/tutorial-4.md b/content/docs/tutorials/tutorial-4.md deleted file mode 100644 index fd7db3a..0000000 --- a/content/docs/tutorials/tutorial-4.md +++ /dev/null @@ -1,123 +0,0 @@ ---- -title: "Tutorial 4: Connecting two machines over Ethernet" -draft: false ---- - -In this tutorial we will connect two machines over an Ethernet network -using the eth-llc or eth-dix IPCPs. The eth-llc use of the IEEE 802.2 -Link Layer Control (LLC) service type 1 frame header. The eth-dix IPCP -uses DIX (DEC, Intel, Xerox) Ethernet, also known as Ethernet II. Both -provide a connectionless packet service with unacknowledged delivery. - -Make sure that you have an Ouroboros IRM daemon running on both -machines: - -``` -$ sudo irmd --stdout -``` - -The eth-llc and eth-dix IPCPs attach to an ethernet interface, which is -specified by its device name. The device name can be found in a number -of ways, we'll use the "ip" command here: - -``` -$ ip a -1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN -group default qlen 1 -link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00 -... -2: ens3: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast -state UP group default qlen 1000 -link/ether fa:16:3e:42:00:38 brd ff:ff:ff:ff:ff:ff -... -3: ens6: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast -state UP group default qlen 1000 -link/ether fa:16:3e:00:76:c2 brd ff:ff:ff:ff:ff:ff -... -``` - -The output of this command differs between operating systems and -distributions. The interface we need to use in our setup is "ens3" on -both machines, but for you it may be something like "eth0" or -"enp0s7f1" if you are on a wired LAN, or something like "wlan0" or -"wlp2s0" if you are on a Wi-Fi network. For Wi-Fi networks, we -recommend using the eth-dix. - -Usually the interface you will use is the one that has an IP address for -your LAN set. Note that you do not need to have an IP address for this -tutorial, but do make sure the interface is UP. - -Now that we know the interfaces to connect to the network with, let's -start the eth-llc/eth-dix IPCPs. The eth-llc/eth-dix layers don't have -an enrollment phase, all eth-llc IPCPs that are connected to the same -Ethernet, will be part of the layer. For eth-dix IPCPs the layers can be -separated by ethertype. The eth-llc and eth-dix IPCPs can only be -bootstrapped, so care must be taken by to provide the same hash -algorithm to all eth-llc and eth-dix IPCPs that should be in the same -network. We use the default (256-bit SHA3) for the hash and 0xa000 for -the Ethertype for the DIX IPCP. For our setup, it's the exact same -command on both machines. You will likely need to set a different -interface name on each machine. The irm tool allows abbreviated commands -(it is modelled after the "ip" command), which we show here (both -commands do the same): - -``` -node0: $ irm ipcp bootstrap type eth-llc name llc layer eth dev ens3 -node1: $ irm i b t eth-llc n llc l eth if ens3 -``` - -Both IRM daemons should acknowledge the creation of the IPCP: - -``` -==26504== irmd(II): Ouroboros IPC Resource Manager daemon started... -==26504== irmd(II): Created IPCP 27317. -==27317== ipcpd/eth-llc(II): Using raw socket device. -==27317== ipcpd/eth-llc(DB): Bootstrapped IPCP over Ethernet with LLC -with pid 27317. -==26504== irmd(II): Bootstrapped IPCP 27317 in layer eth. -``` - -If it failed, you may have mistyped the interface name, or your system -may not have a valid raw packet API. We are using GNU/Linux machines, so -the IPCP announces that it is using a [raw -socket](http://man7.org/linux/man-pages/man2/socket.2.html) device. On -OS X, the default is a [Berkeley Packet Filter -(BPF)](http://www.manpages.info/macosx/bpf.4.html) device, and on -FreeBSD, the default is a -[netmap](http://info.iet.unipi.it/~luigi/netmap/) device. See the -[compilation options](/compopt) for more information on choosing the -raw packet API. - -The Ethernet layer is ready to use. We will now create a normal layer -on top of it, just like we did over the local layer in the second -tutorial. We are showing some different ways of entering these -commands on the two machines: - -``` -node0: -$ irm ipcp bootstrap type normal name normal_0 layer normal_layer -$ irm bind ipcp normal_0 name normal_0 -$ irm b i normal_0 n normal_layer -$ irm register name normal_layer layer eth -$ irm r n normal_0 l eth -node1: -$ irm ipcp enroll name normal_1 layer normal_layer autobind -$ irm r n normal_layer l eth -$ irm r n normal_1 l eth -``` - -The IPCPs should acknowledge the enrollment in their logs: - -``` -node0: -==27452== enrollment(DB): Enrolling a new neighbor. -==27452== enrollment(DB): Sending enrollment info (47 bytes). -==27452== enrollment(DB): Neighbor enrollment successful. -node1: -==27720== enrollment(DB): Getting boot information. -==27720== enrollment(DB): Received enrollment info (47 bytes). -``` - -You can now continue to set up a management flow and data transfer -flow for the normal layer, like in tutorial 2. This concludes the -fourth tutorial. |